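Hi everyone, I've only just started with this and still don't understand it well, so please bear with me if the question is odd (ง๑ •̀_•́)ง

I'm practicing with the MNIST dataset that ships with Keras.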

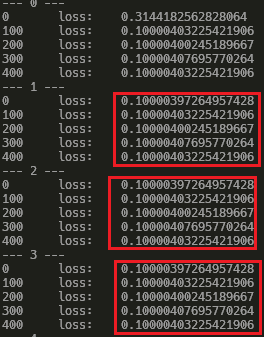

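However, my loss stays the same and I can't tell why; I don't see what is wrong.

It has not changed since epoch 1.

How should I change the code so that it behaves normally?

Thanks.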

    import os

    os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2'

    import tensorflow as tf
    from tensorflow import keras
    from tensorflow.keras import datasets

    # x: (60000, 28, 28)
    # y: (60000,)
    (x, y), _ = tf.keras.datasets.mnist.load_data()

    # convert to tensors
    x = tf.convert_to_tensor(x, dtype=tf.float32)/255  # scale values into 0 ~ 1
    y = tf.convert_to_tensor(y, dtype=tf.int32)

    train_db = tf.data.Dataset.from_tensor_slices((x, y)).batch(128)

    # [b, 784] -> [b, 256] -> [b, 128] -> [b, 10]
    w1 = tf.Variable(tf.random.truncated_normal([784, 256], stddev=0.1))
    b1 = tf.Variable(tf.zeros([256]))
    w2 = tf.Variable(tf.random.truncated_normal([256, 128], stddev=0.1))
    b2 = tf.Variable(tf.zeros([128]))
    w3 = tf.Variable(tf.random.truncated_normal([128, 10], stddev=0.1))
    b3 = tf.Variable(tf.zeros([10]))

    lr = 1e-3

    for epoch in range(10):
        print('---', epoch, '---')
        for step, (x, y) in enumerate(train_db):
            # x [128, 28, 28]
            # y [128]
            x = tf.reshape(x, [-1, 28*28])  # [128, 784]

            with tf.GradientTape() as tape:  # records operations on tf.Variable
                h1 = x@w1+b1
                h1 = tf.nn.relu(h1)
                h2 = h1@w2+b2
                h2 = tf.nn.relu(h2)
                y_out = h2@w3+b3

                # compute loss
                y_onehot = tf.one_hot(y, depth=10)
                loss = tf.reduce_mean(tf.square(y_onehot-y_out))

            # compute gradient
            grads = tape.gradient(loss, [w1, b1, w2, b2, w3, b3])

            # update
            w1.assign_sub(w1 - lr*grads[0])
            b1.assign_sub(b1 - lr*grads[1])
            w2.assign_sub(w2 - lr*grads[2])
            b2.assign_sub(b2 - lr*grads[3])
            w3.assign_sub(w3 - lr*grads[4])
            b3.assign_sub(b3 - lr*grads[5])

            if step%100 == 0:
                print(step, '\tloss:\t', float(loss))

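I played with Keras a bit in 2019-2020, back when I was using it as a standalone package rather than through `tensorflow.keras`, so the suggestions I can give all come from PyTorch experience. Treat them as reference only.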
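One thing that stands out in the posted update step: `assign_sub(v)` subtracts `v` from the variable, so `w1.assign_sub(w1 - lr*grads[0])` leaves `w1` equal to `lr*grads[0]` and throws the old weights away on every step. With weights that small, the network output is close to zero, and the MSE against a 10-class one-hot target would then sit near 0.1. If the loss in the screenshot is stuck around that value, this is a likely cause. The sketch below only reproduces the arithmetic in plain Python; the function names are made up for illustration and are not part of the original script or of TensorFlow.

```python
# Plain-Python stand-ins for the TensorFlow update lines.
# All function names here are hypothetical, chosen only for this illustration.

def update_as_posted(w, grad, lr):
    """Mirrors `w.assign_sub(w - lr * grad)`: subtracts (w - lr*grad) from w."""
    return w - (w - lr * grad)  # the old w cancels out, leaving lr * grad


def sgd_update(w, grad, lr):
    """Mirrors `w.assign_sub(lr * grad)`: one plain gradient-descent step."""
    return w - lr * grad


def mse_if_output_is_zero(depth=10):
    """MSE between an all-zero prediction and a one-hot target of length `depth`."""
    target = [1.0] + [0.0] * (depth - 1)
    return sum((t - 0.0) ** 2 for t in target) / depth


w, grad, lr = 2.0, 2.0, 0.25  # values chosen to be exact in floating point
print(update_as_posted(w, grad, lr))  # 0.5, which is lr * grad
print(sgd_update(w, grad, lr))        # 1.5, which is w - lr * grad
print(mse_if_output_is_zero())        # 0.1
```

In the script itself, the corresponding change would be to write each update as `w1.assign_sub(lr * grads[0])`, and likewise for `b1`, `w2`, `b2`, `w3`, `b3`. Even then, plain SGD with `lr = 1e-3` on an MSE loss may go down slowly, so a somewhat larger learning rate could be worth trying.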

References:

Keras with MNIST

Learning resources:

Free learning resources for TensorFlow (tf2.0)

https://youtu.be/hvgnX1gbsLA

https://github.com/lyhue1991/eat_tensorflow2_in_30_days

https://machinelearningmastery.com/how-to-develop-a-cnn-from-scratch-for-fashion-mnist-clothing-classification/

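The links below are all ML/DL reference books that have sat near the top of Amazon's rankings for years; picking one set to read is enough.

If you want to learn both ML and DL, buy the set below.

One last thing I'd like to say: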

from tensorflow import keras as torch