I'll need this later, so today I did a quick read-through of self-attention.

The slides and content all come from the YouTube channel of Professor Hung-yi Lee (李弘毅) of National Taiwan University:

https://www.youtube.com/watch?v=gmsMY5kc-zw&ab_channel=Hung-yiLee

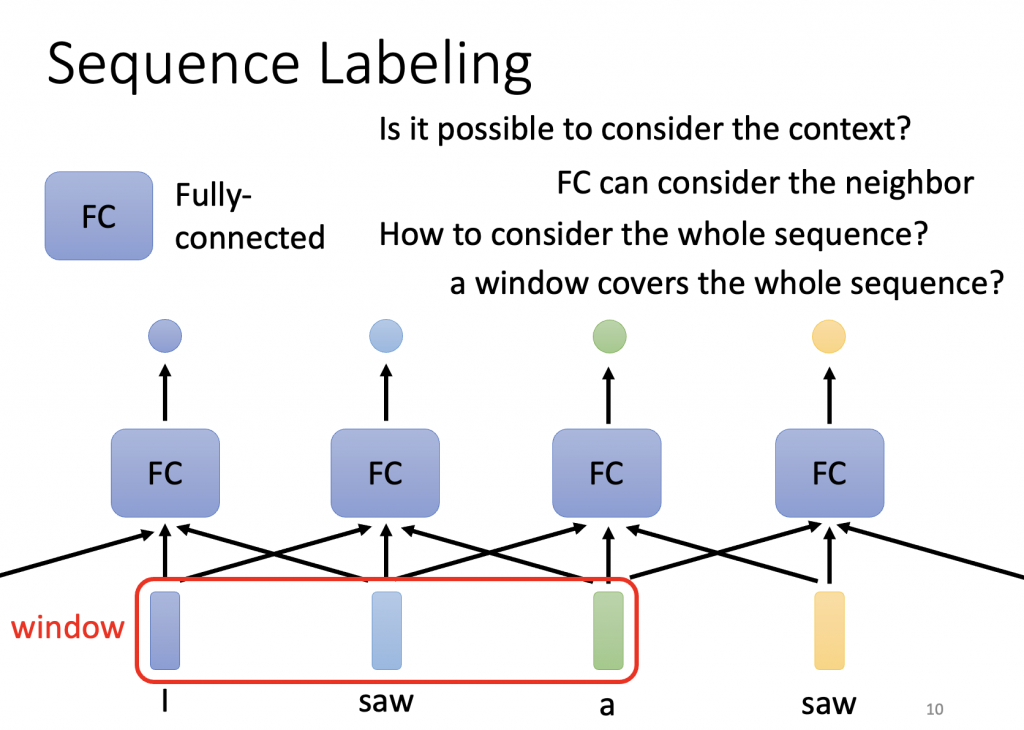

First, the case where the output has a label for every input vector: Sequence Labeling.

Give a fully-connected network the data of an entire window (each vector together with its neighbors).

However, a window cannot cover the entire sequence.
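A minimal sketch of how windowed inputs for a fully-connected network might be built. The helper name `make_windows`, the window radius of 1, and the zero padding at the sequence edges are all assumptions made for illustration; none of them come from the lecture.

```python
def make_windows(sequence, radius=1):
    """Concatenate each vector with its neighbours within `radius` positions.

    Positions that fall outside the sequence are zero-padded (an assumption).
    """
    dim = len(sequence[0])
    pad = [0.0] * dim
    windows = []
    for i in range(len(sequence)):
        window = []
        for j in range(i - radius, i + radius + 1):
            window.extend(sequence[j] if 0 <= j < len(sequence) else pad)
        windows.append(window)
    return windows


seq = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0]]
windows = make_windows(seq, radius=1)
print(windows[0])  # [0.0, 0.0, 1.0, 2.0, 3.0, 4.0]
```

With `radius=1`, the window for the first vector never contains the last vector. Covering the whole sequence would require a window as long as the longest sequence, which makes the fully-connected layer very large.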

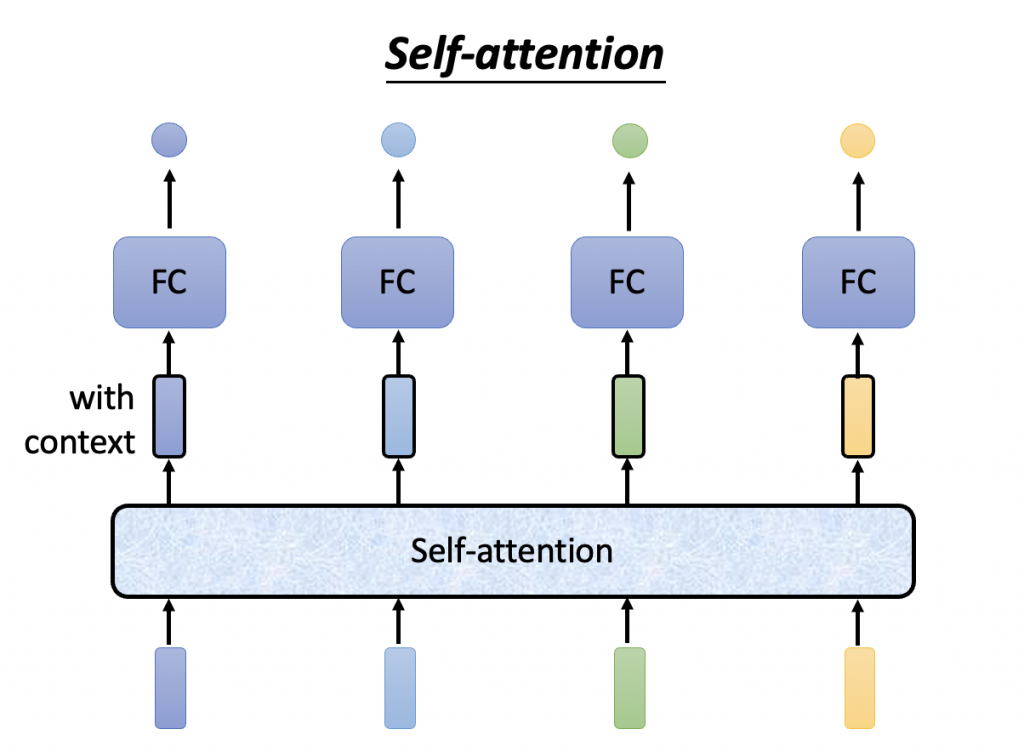

So to take the entire sequence into account, use self-attention.

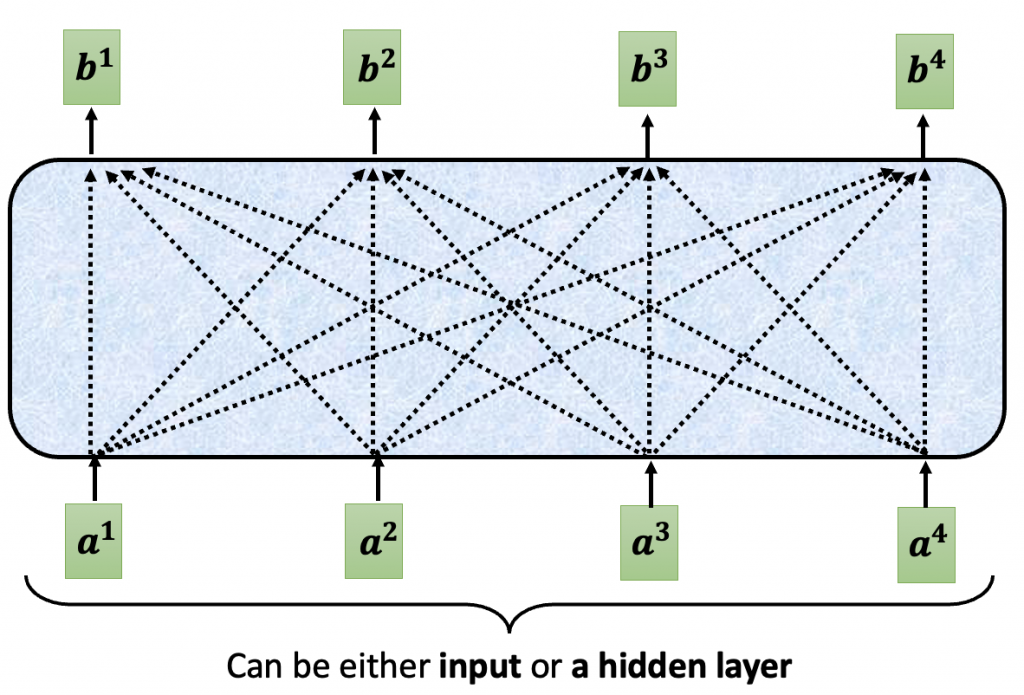

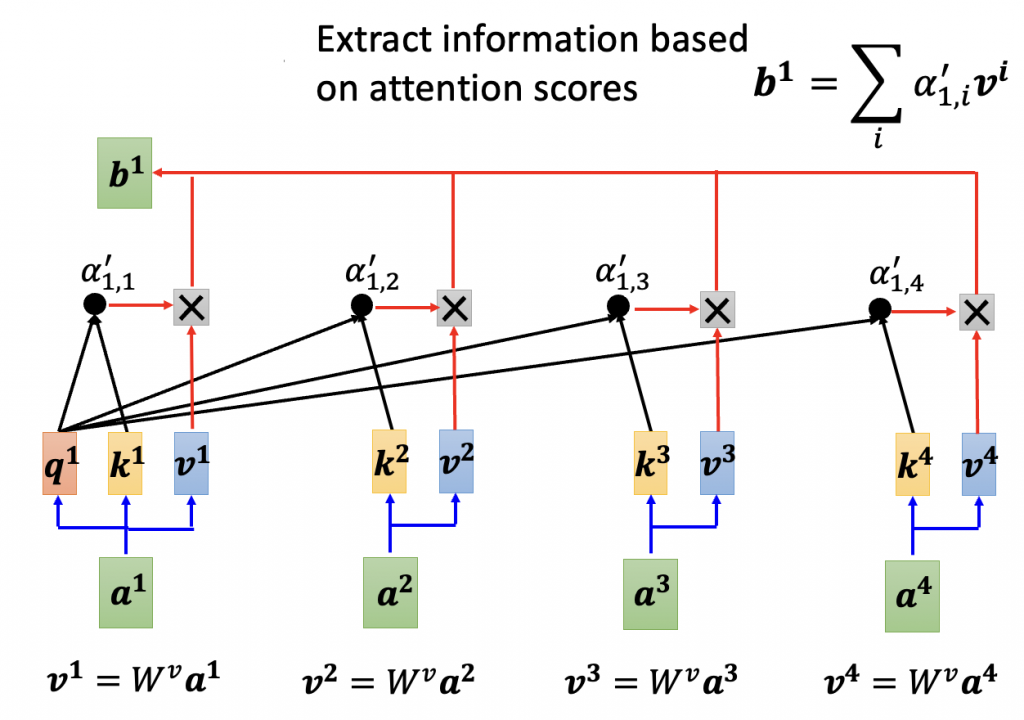

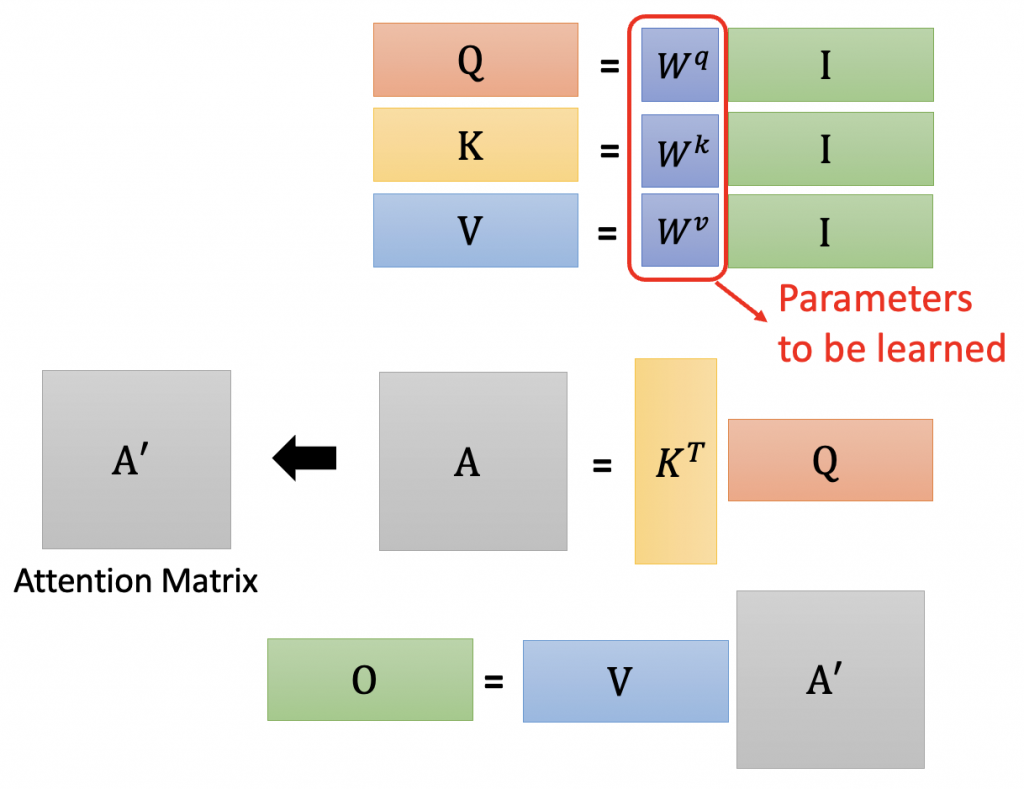

Self-attention takes in the information of an entire sequence, and outputs exactly as many vectors as it is given.

Input: a sequence of vectors

Output: vectors that are each produced only after considering the entire input sequence

b1 through b4 are computed at the same time (in parallel).

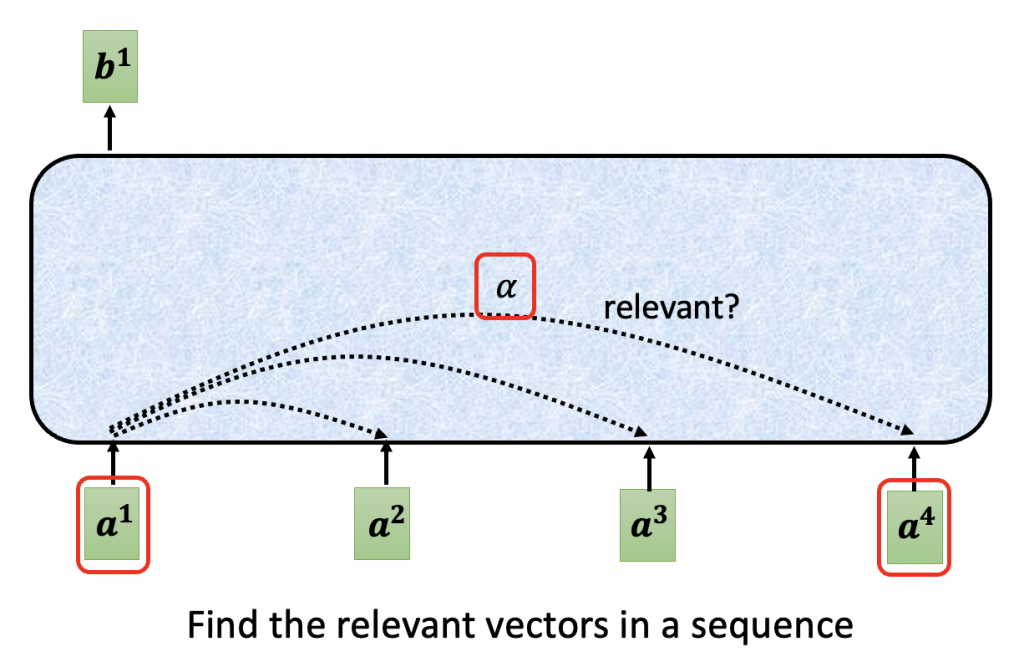

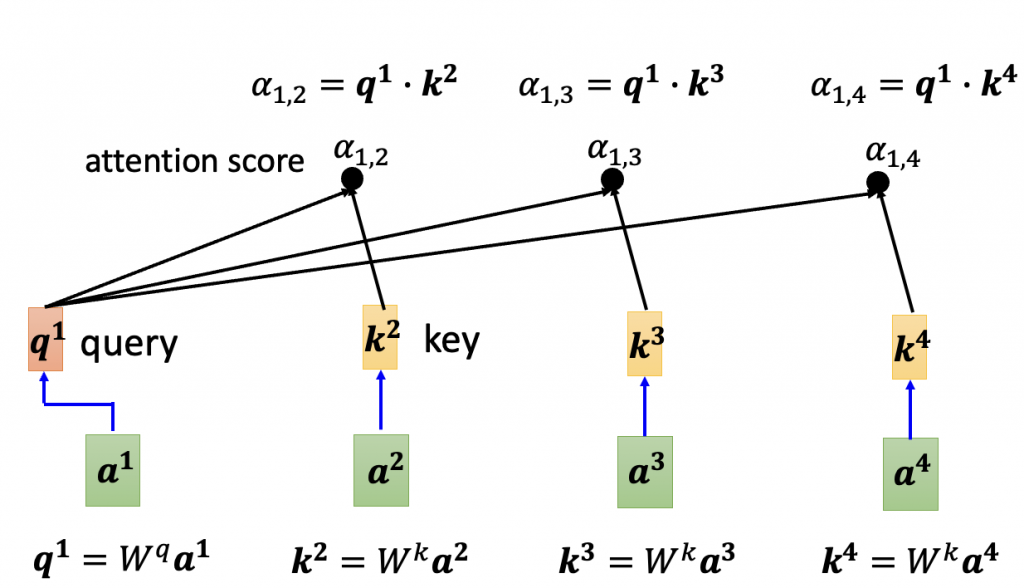

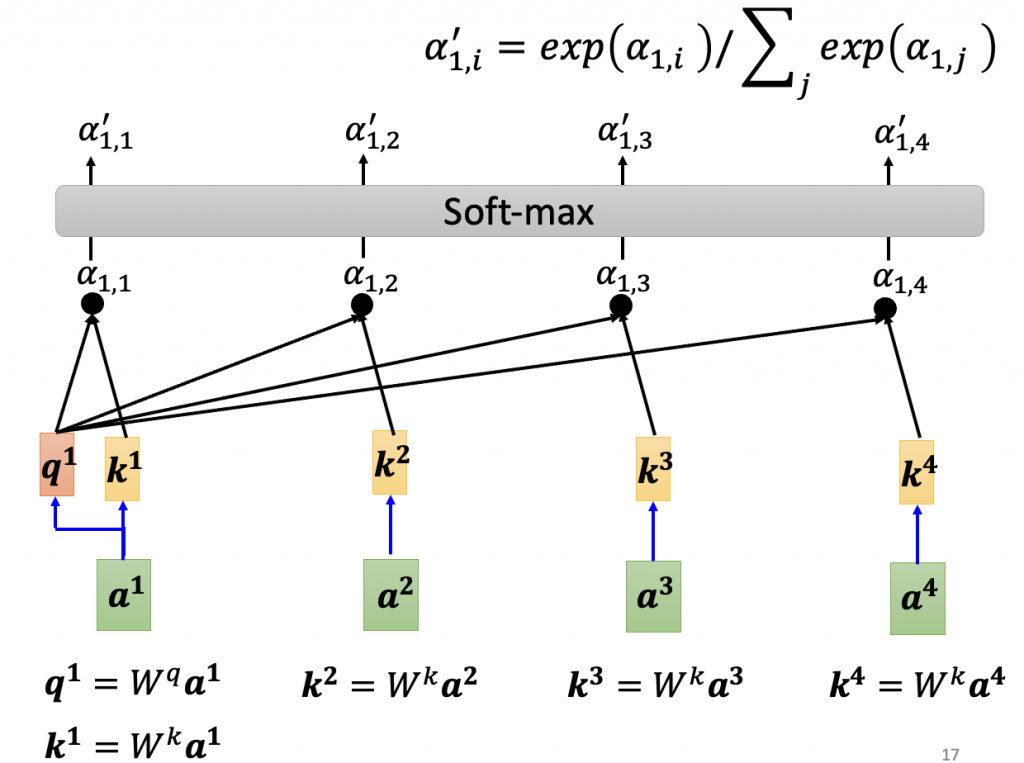

α: the relevance between a1 and each of the other inputs, also called the attention score
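The attention score and the resulting outputs can be sketched roughly as follows. This is a simplified illustration, not the lecture's exact formulation: it assumes dot-product scoring followed by a softmax, and it uses the input vectors directly as queries, keys, and values instead of applying learned projection matrices. The function names are hypothetical, and plain Python lists are used only to keep the example dependency-free.

```python
import math


def dot(u, v):
    return sum(x * y for x, y in zip(u, v))


def softmax(scores):
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_scores(inputs, i):
    """Normalised relevance of inputs[i] to every input (itself included)."""
    return softmax([dot(inputs[i], a_j) for a_j in inputs])


def self_attention(inputs):
    """Return one output vector per input vector.

    Assumption: queries, keys and values are the raw inputs themselves.
    """
    dim = len(inputs[0])
    outputs = []
    for i in range(len(inputs)):
        alpha = attention_scores(inputs, i)
        # b_i is the attention-weighted sum of all input vectors
        b_i = [sum(w * a_j[d] for w, a_j in zip(alpha, inputs))
               for d in range(dim)]
        outputs.append(b_i)
    return outputs


a = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, 0.5]]
alpha_1 = attention_scores(a, 0)  # scores of a1 against a1..a4
b = self_attention(a)             # b1..b4, one per input
```

Each `b_i` depends only on the inputs and never on another output, so the loop iterations are independent of each other. That independence is why b1 to b4 can be computed simultaneously, typically as matrix multiplications in a real implementation.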

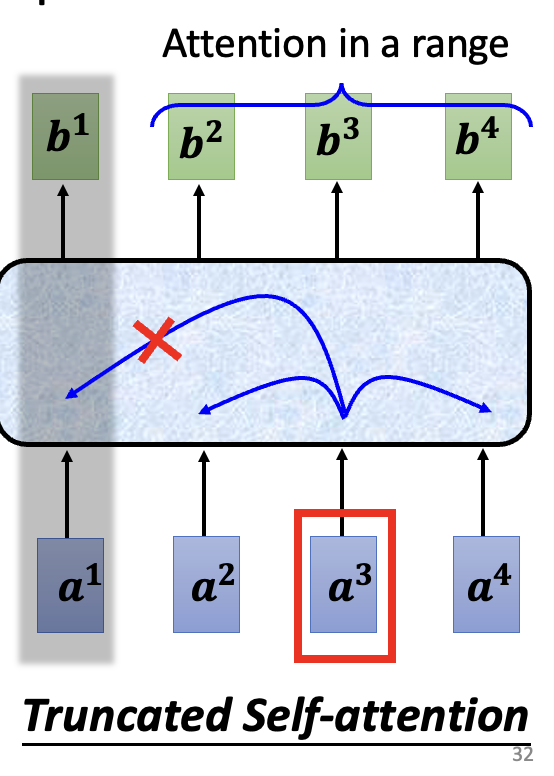

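Truncated self-attention: instead of looking at the whole sentence, look only at a small range (the range size is set by a human), e.g. only the neighbors just before and after, which speeds up computation.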
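A rough sketch of the truncated variant, under the same simplifying assumptions as plain dot-product attention without learned projections (inputs serve as queries, keys, and values). The function name `truncated_self_attention` and the `radius` parameter are hypothetical names chosen for this example.

```python
import math


def truncated_self_attention(inputs, radius=1):
    """Self-attention where position i only attends to positions i-radius..i+radius."""
    n, dim = len(inputs), len(inputs[0])
    outputs = []
    for i in range(n):
        lo, hi = max(0, i - radius), min(n, i + radius + 1)
        neighbours = inputs[lo:hi]  # the hand-picked range
        scores = [sum(x * y for x, y in zip(inputs[i], a_j))
                  for a_j in neighbours]
        peak = max(scores)  # subtract the max for numerical stability
        exps = [math.exp(s - peak) for s in scores]
        total = sum(exps)
        b_i = [sum((e / total) * a_j[d] for e, a_j in zip(exps, neighbours))
               for d in range(dim)]
        outputs.append(b_i)
    return outputs


a = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, 0.5], [9.0, 9.0]]
b = truncated_self_attention(a, radius=1)

# Changing a far-away input leaves the first output untouched.
a_changed = a[:-1] + [[-5.0, 3.0]]
b_changed = truncated_self_attention(a_changed, radius=1)
```

For a sequence of length n, this computes at most n × (2 × radius + 1) scores instead of n × n, which is where the speed-up comes from.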

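Self-attention is the complex version of CNN.

This is because a CNN only attends within a receptive field whose size is set by hand, whereas in self-attention the range to attend to is learned by the network.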