Using PaliGemma today did not go as smoothly as I had hoped, but I am still writing down the process.

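I first tried paligemma-3b-pt-224. It ran without errors, but the answers were very poor, possibly because the model is small. As mentioned yesterday, PaliGemma 2 probably needs to be fine-tuned for the task to do well. (Even so, the results were worse than I expected, so I need to test further to understand why.)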

I then switched to the Hugging Face example, which uses the paligemma-3b-mix-224 model.

from huggingface_hub import notebook_login

notebook_login()

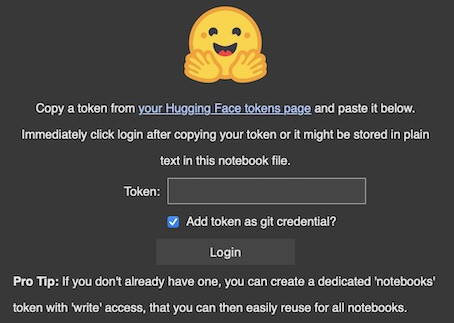

After running this cell, the screen above appears. Follow its instructions and go to the Hugging Face website to create a token.
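If the login widget is inconvenient (for example outside a notebook), a token stored in an environment variable can be used instead. The following is only a minimal sketch: the helper names `read_hf_token` and `login_from_env` are my own, and I am assuming the token was saved under `HF_TOKEN`. `huggingface_hub.login(token=...)` is the non-interactive counterpart of `notebook_login()`.

```python
import os


def read_hf_token(env_var="HF_TOKEN"):
    """Return the token stored in env_var, or None if it is unset or blank."""
    token = os.environ.get(env_var, "").strip()
    return token or None


def login_from_env(env_var="HF_TOKEN"):
    """Log in to the Hugging Face Hub with a token taken from the environment.

    Returns True if a token was found and passed to login(), False otherwise.
    Both helper names are hypothetical, not part of huggingface_hub.
    """
    token = read_hf_token(env_var)
    if token is None:
        return False
    # Imported lazily so the helper above can be used without the library.
    from huggingface_hub import login

    login(token=token)
    return True
```

Calling `login_from_env()` at the top of the notebook would then replace the `notebook_login()` cell. The PaliGemma checkpoints are gated, so the license on the model page still has to be accepted with the same account.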

from transformers import AutoProcessor, PaliGemmaForConditionalGeneration

from PIL import Image

import requests

import torch

import matplotlib.pyplot as plt

model_id = "google/paligemma-3b-mix-224"

model = PaliGemmaForConditionalGeneration.from_pretrained(model_id).eval()

processor = AutoProcessor.from_pretrained(model_id)

url = "https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/transformers/tasks/car.jpg?download=true"

image = Image.open(requests.get(url, stream=True).raw)

plt.imshow(image)

plt.axis("off")

plt.show()

# Instruct the model to create a caption in Spanish

prompt = "caption es"

model_inputs = processor(text=prompt, images=image, return_tensors="pt")

input_len = model_inputs["input_ids"].shape[-1]

with torch.inference_mode():

    generation = model.generate(**model_inputs, max_new_tokens=100, do_sample=False)

    generation = generation[0][input_len:]

decoded = processor.decode(generation, skip_special_tokens=True)

print(decoded)

But the last cell ran for a very, very long time....
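One likely cause, though I have not confirmed it: `from_pretrained(model_id)` with no extra arguments loads the weights in float32 on the CPU, and generating up to 100 tokens with a 3B-parameter model on a CPU is slow. The sketch below is a possible way to check this, not a verified fix. It picks a GPU and bfloat16 when available and times the generation step. The helpers `pick_runtime`, `timed`, and `caption_with_timing` are hypothetical names of my own, and the smaller `max_new_tokens=20` is just an assumption to shorten the run.

```python
import time
from contextlib import contextmanager


def pick_runtime(cuda_available, bf16_supported):
    """Return (device, dtype_name) for loading the model."""
    if cuda_available and bf16_supported:
        return "cuda", "bfloat16"
    if cuda_available:
        return "cuda", "float32"
    return "cpu", "float32"


@contextmanager
def timed(label):
    """Print how long the wrapped block took, in seconds."""
    start = time.perf_counter()
    try:
        yield
    finally:
        print(f"{label}: {time.perf_counter() - start:.1f}s")


def caption_with_timing(model_id, image, prompt="caption es", max_new_tokens=20):
    """Load the model on the chosen device, then time a single generation."""
    import torch
    from transformers import AutoProcessor, PaliGemmaForConditionalGeneration

    has_cuda = torch.cuda.is_available()
    device, dtype_name = pick_runtime(
        has_cuda, has_cuda and torch.cuda.is_bf16_supported()
    )
    dtype = getattr(torch, dtype_name)

    with timed("load"):
        model = PaliGemmaForConditionalGeneration.from_pretrained(
            model_id, torch_dtype=dtype
        ).to(device).eval()
        processor = AutoProcessor.from_pretrained(model_id)

    inputs = processor(text=prompt, images=image, return_tensors="pt").to(device)
    # Match the image tensor to the model's dtype (matters for bfloat16).
    inputs["pixel_values"] = inputs["pixel_values"].to(dtype)
    input_len = inputs["input_ids"].shape[-1]

    with timed("generate"), torch.inference_mode():
        out = model.generate(**inputs, max_new_tokens=max_new_tokens, do_sample=False)
    return processor.decode(out[0][input_len:], skip_special_tokens=True)
```

With the `image` loaded earlier, `print(caption_with_timing("google/paligemma-3b-mix-224", image))` would show the load and generation times separately, which should make it clear whether generation on the CPU is the bottleneck.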